For a few months now we have all felt a little shaken in every area of life, including our work and its associated workflows. Events with in-person audiences have yet to be allowed back, but artists and AV companies have found innovative ways to adapt.

One of the technologies that has come to the forefront in the AV sector is xR: Mixed or Extended Reality.

xR stages are LED cubes or partial volumes and may include, for instance, three sides of a cube, a surrounding lighting setup and a tracked camera environment. The camera records the cube and everything within it and supplies its tracking information to a media server, which then maps a computer-generated 3D scene onto the LED walls from the camera's eye-point.
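The eye-point mapping can be illustrated with a little geometry. The following is a minimal sketch, not how disguise or any other media server implements it. It assumes a single flat LED wall on the plane z = 0, a tracked camera position in front of it and a virtual point behind it, and computes where on the wall that point has to be drawn so that it lines up from the camera's view. The function name and all coordinates are hypothetical.

```python
def wall_pixel_position(camera, virtual_point, wall_z=0.0):
    """Return (x, y) on the wall plane z = wall_z where the line of sight
    from the camera to the virtual point crosses the wall.

    Assumption for this sketch: the wall is flat and axis-aligned, and
    positions are (x, y, z) tuples in metres.
    """
    cx, cy, cz = camera
    px, py, pz = virtual_point
    dz = pz - cz
    if dz == 0:
        raise ValueError("line of sight is parallel to the wall")
    # Fraction of the way from the camera to the virtual point at which
    # the line of sight hits the wall plane.
    t = (wall_z - cz) / dz
    if not 0.0 <= t <= 1.0:
        raise ValueError("wall is not between camera and virtual point")
    return (cx + t * (px - cx), cy + t * (py - cy))


# Hypothetical example: a virtual object 3 m behind the wall,
# seen from two camera positions 3 m in front of it.
virtual_object = (2.0, 1.5, -3.0)
print(wall_pixel_position((0.0, 1.5, 3.0), virtual_object))  # (1.0, 1.5)
print(wall_pixel_position((2.0, 1.5, 3.0), virtual_object))  # (2.0, 1.5)
```

When the camera moves one way, the drawn position on the wall shifts with it, which is why the content has to be re-rendered from live tracking data rather than played back as a fixed image.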

Real Time Render Engines

For content production at dandelion + burdock we have recently transitioned to game engines such as Unreal and Unity, which excel at world building and have broad resource pools. However, other tools are better suited to the faster turnaround and integration needs of a typical live performance situation, which usually include cueing, media input and fast compile times. Notch is a tool for creating interactive and video content in one unified real-time environment. It integrates directly into media servers and allows us to create interactive, generative content and live video effects in a powerful, easy and stable workflow.

Integration with disguise

Recently our team had the opportunity to be among the first to take advantage of the xR stage at disguise, London. disguise's set includes all the elements required for xR: the LED stage, lighting, camera, tracking system and, of course, media server control.

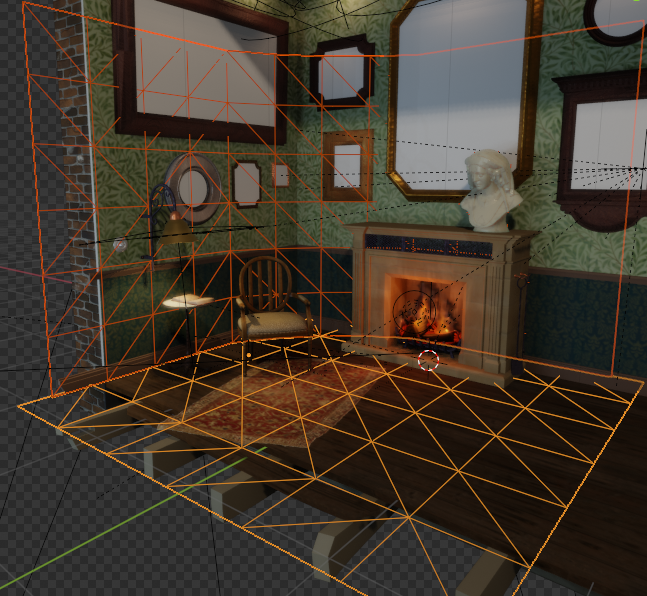

To coincide nicely with the summer heatwave in London, our test scene was a fireplace and reading room built in Notch. We made the scene as a counterpoint to the many modern, sleek-looking designs, while keeping features common to corporate setups, such as live streaming and programmed video. Another consideration for the fireplace was to allow a multitude of camera angles that highlight narrative and dialogue, beyond the more traditional TV studio camera pick-ups.

Notch & disguise

Notch blocks can be integrated directly into disguise. This workflow allows operators to see and control scene parameters and to connect media easily on the fly.

Considering performance in xR is crucial: design teams need to exchange feedback frequently with the AV team to improve the visual results while also managing render resources for best performance. This process begins in the design studio but continues until the show or recording takes place, because changes and additions to the media server load can have knock-on effects on real-time performance.

A benefit of starting off with Notch was that we had been dry testing the block on our studio's disguise designer machine, which ensured the xR workflow was proven before media was loaded on site.

Although this setup did not yet represent the xR screens, we saw all the behaviours and feedback from the virtual stage camera in advance.

When we received the xR studio's design template, we overlaid it onto the virtual room. The spatial mapping in disguise allows fast alignment of the block in the intended direction.

Virtual Props

In xR, the virtual and analogue worlds are usually at one-to-one scale. We wanted to keep the integrity of the fireplace design and therefore changed our original armchair design to match a real-life prop.

We scanned, modelled and replaced our chair in the scene and created a Notch exposure to "render shadows" only for this prop. This way we could spike the real chair reliably for any camera take.

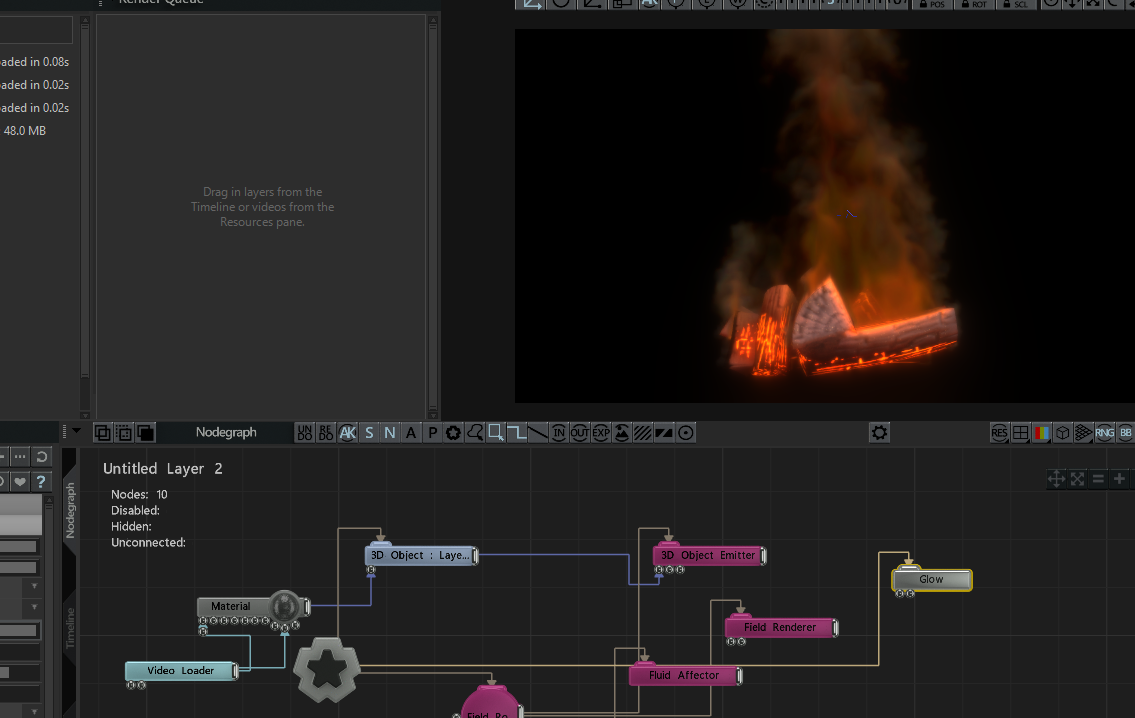

Integrating tracked cameras in xR demands performance headroom, and breaking out parts of our design or simulations in Notch and compositing them in disguise provides exactly that.

Fire Dynamics

We originally planned the fire as a volumetric effect, but also prepared the fire dynamics to work as an animated texture. We rendered the design in Notch and brought it into disguise as a regular video. The visual result was close to identical and rewarded us with an improvement of about 15 fps.
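What a gain of about 15 fps means in render time depends on the starting frame rate, which we have not stated here. As a rough illustration only, the sketch below converts frame rates to per-frame render time; the baseline rates of 30 and 45 fps are hypothetical and are not measurements from this project.

```python
def frame_time_ms(fps):
    """Time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def time_saved_ms(fps_before, fps_gain):
    """Per-frame render time freed up by raising the frame rate by fps_gain."""
    return frame_time_ms(fps_before) - frame_time_ms(fps_before + fps_gain)


# Hypothetical baselines; the real starting frame rate is not given.
for baseline in (30, 45):
    saved = time_saved_ms(baseline, 15)
    print(f"{baseline} -> {baseline + 15} fps frees {saved:.1f} ms per frame")
```

Under these assumed baselines the same 15 fps gain frees roughly 11.1 ms per frame from 30 fps but only about 5.6 ms from 45 fps, so the saving is worth most when the scene is furthest from its target frame rate.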

Previz: Previewing the Scene Online

Rendering performance is linked to considered design processes. These include, for example, limiting the number of active lights, baking textures and dynamics, and keeping 3D topology clean. Since our team mostly follows these practices, the fireplace scene was readily transferable into a WebGL format. This was imported into a Previz scene for previewing online.

Next Generation Storytelling

xR is proving that our studio can rely on real-time tools and rethink its approach to further content productions. Organic and interactive virtual landscapes open up opportunities for us to tell engaging stories in emotionally connected ways.